The email marketing analytics gap: by design, not by accident

Email platforms are very good at sending email. They're less good at telling you why one email outperformed another. That isn't an accident — it's a consequence of what they were built for.

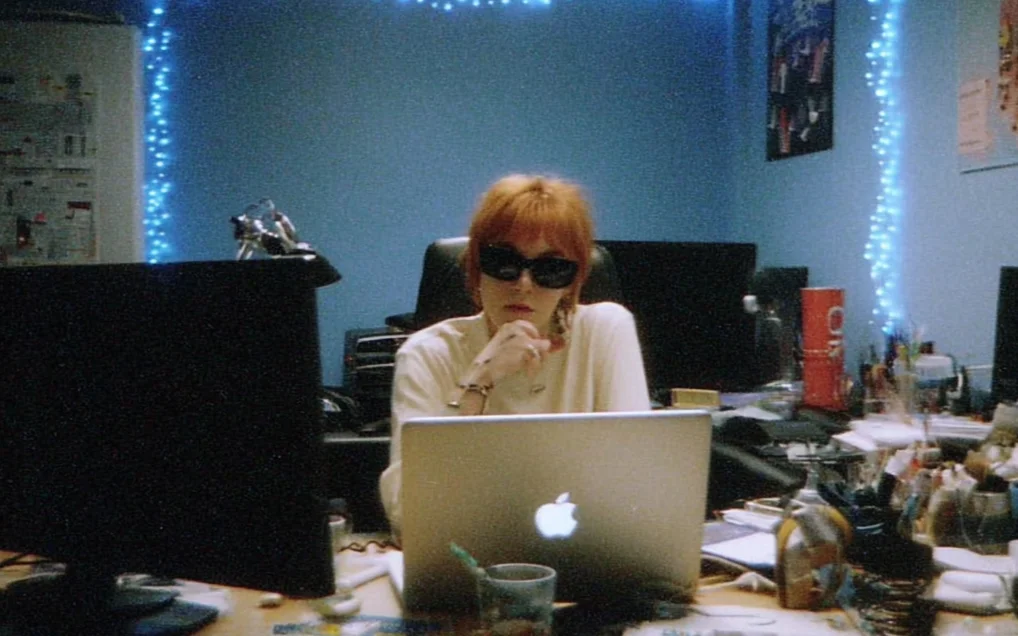

Every email marketer we talk to eventually describes the same Tuesday afternoon.

Three campaigns went out last week. One performed unusually well. Someone asks why. The answer arrives as a mix of screenshots in a Slack thread, CSV exports pasted into a shared sheet, and a quiet suspicion — "I think it was the subject line?" — that nobody has the tools to confirm or deny.

This is not a failure of effort. It's a failure of surface area. The tools the team has been given were built to send the email, not to understand it after the fact.

What ESPs were actually built for

An ESP's center of gravity is the send. Template editors, list management, scheduling, deliverability, compliance — everything is organized around getting an email into an inbox at a specific time.

That is a genuinely hard engineering problem, and the best ESPs have solved it well. ActiveCampaign, Brevo, Klaviyo, Mailchimp, Customer.io — each one takes the messy inputs of a marketing team and turns them into reliable delivery. This is the reason they exist, and it's the reason they'll continue to exist.

What happens after the send is a different product. A hard one, actually. Because "after the send" is where the interesting questions live:

- Why did this subject line open better than that one, even though they're semantically nearly identical?

- When we use a single hero image vs. a carousel, how does click-through shift across segments?

- Our automation sequence converts at 4% — but is that because step 2 is pulling its weight, or because step 5 is doing all the work?

- A competitor just changed their send cadence. Did our subscribers notice?

These are not reporting questions. They are pattern questions. And ESP dashboards are built for reporting.

Reporting vs. pattern

Reporting answers: what happened? Pattern answers: why did it happen, and what should I do next?

Every ESP gives you reports. Open rate. Click rate. Unsubscribes. Maybe a funnel if you squint. These numbers are correct, and they are almost never enough to make a decision. Because what you actually want to know isn't "what was the open rate on that campaign," it's "among the 40 campaigns I've sent this quarter, which 5 opened meaningfully above average, and what did they have in common?"

That second question requires three things an ESP doesn't give you:

- A way to describe every campaign consistently — content, layout, tone, CTA, density, subject pattern — so you can compare them apples-to-apples.

- A way to group them — so the comparison is against the right peers (a weekly newsletter vs. other newsletters, not against a flash sale).

- A way to attribute outcomes back to those descriptions — so you can connect "heavy on product photography" to "2.1x conversion lift on the segment we care about."

None of that is magic. It's just work that has historically been done by hand, in spreadsheets, by people who didn't want to do it.
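To make the three steps concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any ESP or Sendlens implements this: the `Campaign` fields, the `lift_by_trait` helper, and every number in the sample data are invented for the example. It describes each campaign with the same fields, groups campaigns by kind so newsletters are compared only with newsletters, and attributes open rate back to one described trait as a ratio against the group average.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Campaign:
    # Describe: the same fields for every campaign (hypothetical, trimmed to two).
    name: str
    kind: str         # peer group, e.g. "newsletter" or "flash_sale"
    hero: str         # e.g. "single_image" or "carousel"
    open_rate: float  # outcome metric taken from the ESP's report


def lift_by_trait(campaigns, trait):
    """Map (kind, trait value) to mean open rate divided by that kind's mean."""
    # Group: collect campaigns by kind so comparisons stay among peers.
    by_kind = defaultdict(list)
    for c in campaigns:
        by_kind[c.kind].append(c)

    lifts = {}
    for kind, peers in by_kind.items():
        baseline = mean(c.open_rate for c in peers)
        # Attribute: average the outcome for each value of the chosen trait.
        by_value = defaultdict(list)
        for c in peers:
            by_value[getattr(c, trait)].append(c.open_rate)
        for value, rates in by_value.items():
            lifts[(kind, value)] = mean(rates) / baseline
    return lifts


# Made-up sample data, for illustration only.
campaigns = [
    Campaign("weekly-01", "newsletter", "single_image", 0.30),
    Campaign("weekly-02", "newsletter", "carousel", 0.20),
    Campaign("weekly-03", "newsletter", "single_image", 0.30),
    Campaign("flash-01", "flash_sale", "carousel", 0.50),
]

lifts = lift_by_trait(campaigns, "hero")
for (kind, value), lift in sorted(lifts.items()):
    print(f"{kind:<12} {value:<14} {lift:.2f}x")
```

A lift above 1.0 means campaigns with that trait opened better than their peer group on average. With this few campaigns the ratio is only a prompt for a closer look, not evidence of cause.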

Fingerprints are just structured memory

The move we made at Sendlens was simple once we saw it. Every campaign has a fingerprint. Hero image, copy length, CTA shape, color palette, density, tone, subject-line pattern — about fifteen structured fields, captured at send time, stored alongside the performance data.

Fingerprints turn your email history from a pile of screenshots into a searchable, structured memory. Suddenly "which campaigns used a single hero image with a centered CTA" is a filter, not a side project. "How did that pattern perform across our last four quarters" is a chart, not a bet.
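As a rough sketch of what "a filter, not a side project" means, the Python below stores each campaign as a record with a nested fingerprint and answers the single-hero-image, centered-CTA question with one function call. The field names, values, campaign names, and the `matching` helper are all hypothetical, chosen for the example; this is not the Sendlens schema or API.

```python
from statistics import mean

# Hypothetical history: fingerprint fields and numbers are illustrative only.
history = [
    {"name": "spring-launch", "open_rate": 0.31,
     "fingerprint": {"hero": "single_image", "cta": "centered", "tone": "playful"}},
    {"name": "summer-sale", "open_rate": 0.24,
     "fingerprint": {"hero": "carousel", "cta": "centered", "tone": "urgent"}},
    {"name": "autumn-digest", "open_rate": 0.29,
     "fingerprint": {"hero": "single_image", "cta": "centered", "tone": "neutral"}},
    {"name": "winter-promo", "open_rate": 0.22,
     "fingerprint": {"hero": "single_image", "cta": "left", "tone": "urgent"}},
]


def matching(records, **criteria):
    """Return records whose fingerprint equals every requested field value."""
    return [
        r for r in records
        if all(r["fingerprint"].get(field) == value
               for field, value in criteria.items())
    ]


hits = matching(history, hero="single_image", cta="centered")
print([r["name"] for r in hits])
print(round(mean(r["open_rate"] for r in hits), 3))
```

Because the fingerprint is stored as structured fields rather than screenshots, any combination of fields becomes a query, and the performance numbers come along with the matches.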

We didn't invent this idea. Every serious creative team — in advertising, in film, in product — maintains some version of it. Moodboards. Reference libraries. Pattern archives. The difference is that most email teams don't have the time or tooling to maintain one, so the knowledge stays trapped in the heads of whoever happened to be in the room.

That's the gap we're filling. Not the send itself — the ESPs are good at that. The memory of every send, and the ability to learn from it.

What this looks like in practice

Three things change when the gap closes:

Team decisions get faster. Instead of the Tuesday-afternoon screenshot hunt, the answer to "why did that campaign perform well" is already structured. Someone on the team writes a one-paragraph takeaway, links the cluster of comparable campaigns, and moves on. The meeting that used to take an hour takes ten minutes.

Risky bets get cheaper. Testing a new tone, a new layout, a new cadence is less of a gamble when you know what your baseline actually is. You aren't testing against "what we usually do" — a phrase that means slightly different things to every person on the team. You're testing against a pinned cluster with known averages.

Institutional knowledge survives departures. The email operator who ran your best campaigns last year made their choices for reasons. If those reasons live only in their head, the reasons leave when the operator does. Fingerprints turn them into something the next person can inherit.

None of this requires ripping out your ESP. That's the point. The ESP keeps doing what it's good at. Sendlens reads the outputs, adds the memory layer, and gives the patterns back.

Your ESP wasn't built to understand your emails. It was never going to be. That isn't a complaint — it's just a division of labor worth being honest about.